Lots of monopoly-related news, as usual. Last week, Trump antitrust chief Gail Slater was fired in a major shake-up among enforcers, the Texas Attorney General brought an antitrust case against fire truck makers, and Pam Bondi told Congress that the Epstein Files are a distraction from the stock market. Plus a lot more.

But before getting to the full news round-up, I want to focus on something odd I saw over the past week. A bunch of different elites started making more aggressive claims that artificial intelligence is now some sort of super-intelligent singularity. It’s weird, and I would normally discount these arguments as not worth paying attention to, except that they seem to be capturing the minds of major financiers and even lefty Senators like Bernie Sanders. And I think there’s something problematic going on here.

Why Are They Saying We’ve Invented God?

Let’s start with the arguments. There are a number of them, and they go into a few categories.

First, certain executives are saying, with caveats, that they are creating super-intelligent and almost God-like forces. The main proponent of this line of argument is Anthropic CEO Dario Amodei, who claimed in a podcast this week “we are nearing the exponential” and are about to become “a country of geniuses in a data center.” In his opinion, AI will bring in trillions of dollars by 2029, and the economy will be growing at between 10% and 20% a year. If you extrapolate his view of energy usage, by 2032 global data center electricity demand would be greater than the world’s entire electricity production.

To manage this new force with God-like abilities, Amodei is calling for regulation around “AI safety.” His firm has hired an ethicist to write a “Constitution” for its product, and is attempting to teach it right from wrong.

As if to show the importance of Amodei’s concern about the power of his God-like models, the Wall Street Journal just wrote a story about how Anthropic’s tools were used in the invasion of Venezuela. There are no details about how AI was used. And without any context, it’s impossible to draw any conclusions about anything. But it sure seems fancy and terribly important.

Now, Amodei doesn’t want his all-powerful tools used in warfare or in domestic surveillance, and he and the Pentagon are in a major tiff over whether they can be used. This deep moral quandary is meant to underscore that there are real stakes here, even though we have no idea what they are or whether it’s just bullshit artists trying to raise new investor capital. Anthropic, coincidentally, just closed a $30 billion funding round.

Second, a number of business leaders and commentators are saying that all economic and cultural activity is shortly going to be completely transformed by this force they have unleashed. Google’s CEO is now broadcasting on 60 Minutes that its AI is so advanced it is learning skills that the company doesn’t intend for it to have. Wow!

Meanwhile Microsoft’s head of AI, Mustafa Suleyman, predicted that “white-collar work, where you’re sitting down at a computer, either being a lawyer or an accountant or a project manager or a marketing person — most of those tasks will be fully automated by an AI within the next 12 to 18 months.” Again, wow! Now, Suleyman also contradicted himself in that same interview, but don’t let that concern you.

On the economic front, it’s now routine for policymakers to tout productivity numbers coming from AI. For instance, Erik Brynjolfsson, an economist and AI consultant, wrote in the Financial Times that the “productivity” takeoff from AI is finally here. His evidence is that GDP growth was up, even though the government revised downward the number of jobs created last year.

There’s also a cultural argument. The New York Times published an extremely weird article titled “We’re All Polyamorous Now. It’s You, Me and the A.I.” The author, Amelia Miller, is also an investor in AI. And she just got a “master’s degree at the Oxford Internet Institute, where she studied human-A.I. relationships,” which is apparently a thing. She seems to believe that developers can help humans manage intimacy with machines more effectively.

There’s a lot more hype.

There’s also a full-on bullying effort towards anyone who doesn’t buy these extraordinary claims. A group of writers loosely associated with Abundance and the effective altruism cult seem to be the tip of the spear. Abundance co-author Derek Thompson demanded to know why any journalist might doubt the “spooky and unnerving and powerful” AI tools that have been recently unveiled. Economics commentator Noah Smith wrote a piece titled “You are no longer the smartest type of thing on Earth” in which he says that a sort of sentient AI is inevitably taking over, that “We will be well-cared-for pets.” The Argument’s Kelsey Piper demanded that those who don’t believe that God is here download Claude Code and play with it.

There’s a lot more, but that’s the gist of what I’ve noticed over the past week. Even though these are all different people making different claims, something about this whole approach feels like a product roll-out.

And it’s sort of working. One character I watch in financial markets is Mohamed El-Erian, a former CEO of bond giant Pimco, frequent guest on CNBC, and a writer for the Financial Times. El-Erian is a social climber who likes to stay within the elite consensus, and in his most recent column wrote that “this time could really be different on jobs.” Even Bernie Sanders has been convinced. He’s heading to Silicon Valley, and saying “there is now a legitimate fear that artificial general intelligence will not only become smarter than human beings, they’ll be able to communicate with each other independent of humanity.”

Most people find this AI discourse weird and annoying, because as Jon Stokes notes, it’s done by people disconnected from actual work.

When I read comments on various AI-focused articles, the usual response is irritation. There’s a reason for that. This roll-out of AI as an inevitable technology above politics is being used to disguise mass theft.

No, You Haven’t Invented God

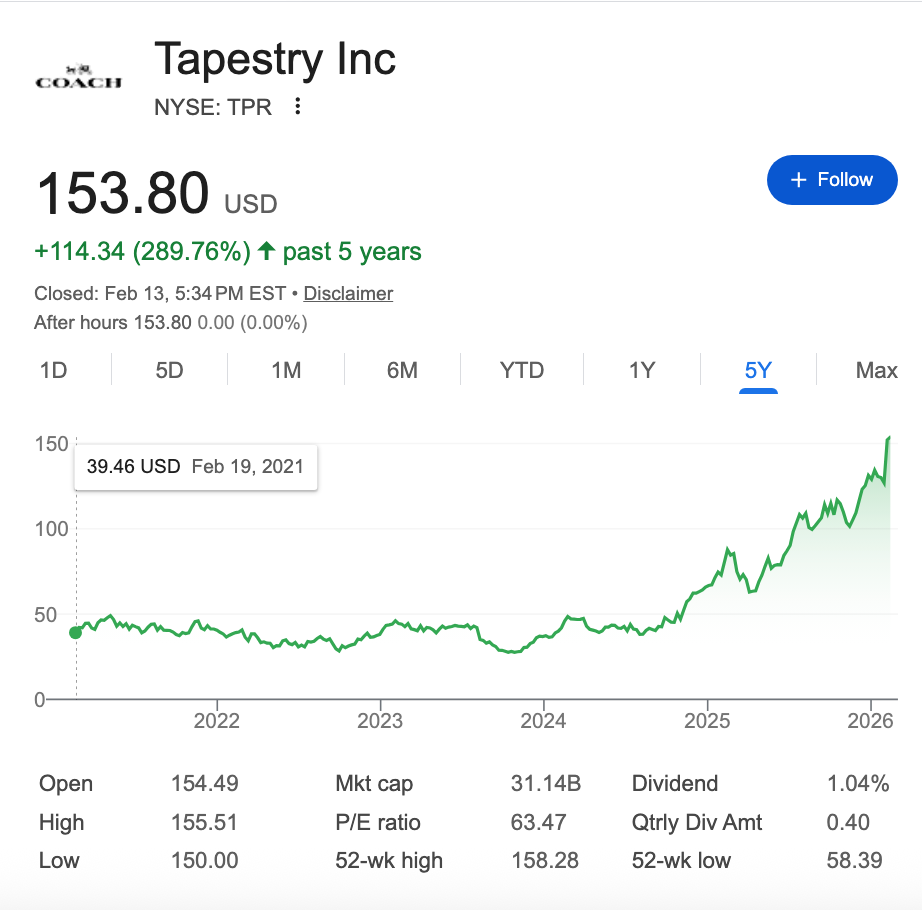

Let’s start with a basic question. Are these claims true? Obviously not. If most white collar jobs were going to be eliminated in the next year, Wall Street would be acting very differently. We don’t know which companies would benefit from the elimination of the white collar workforce, but we could expect widespread short-selling of a company like Tapestry, which owns luxury accessory producers Coach and Kate Spade. That’s the stuff that white collar workers wouldn’t have the money to buy if their jobs went away. Is that happening? Not remotely. Tapestry is at a record high.

Moreover, the AI companies themselves aren’t acting like they’ve invented God. Anthropic is spending tens of millions of dollars to support Republican Senators who help their company, which wouldn’t be necessary if they had actually “gone exponential.” It’s likely that hundreds of millions, or even billions, are going to flood into politics from AI oligarchs, to protect their power. That makes no sense, unless this build-out isn’t inevitable.

I can’t predict the future, but Freddie deBoer has the right take about these claims. He cited Carl Sagan’s quote that “extraordinary claims require extraordinary evidence.” And in this case, they should. These people are demanding the use of our water, electricity, data, money and attention, based on their fantastical stories. They should have to prove that this stuff is going to happen before taking our stuff; we shouldn’t have to disprove it to prevent them from stealing it.

I also doubt very much that white collar workers will be ‘replaced’ by AI. Cory Doctorow points out that AI can be very useful. It can, for instance, help radiologists catch tumors they would otherwise miss. But it’ll mostly be used to justify firing radiologists so hospitals can save money. That will mean hospitals will earn more, but more people will die of cancer. So in short, there might be mass firings of white collar workers, but it will just result in work not getting done. And that is common in corporate America. AI isn’t really ‘replacing’ customer service agents, it’s just making customer service worse.

Why The Marketing Push?

So why push this set of stories now? I can think of a number of reasons. Wall Street has gone wobbly on the data center build-out, but if you’ve invented God or the Devil, that’s a great investment thesis. But another, more important reason is to avoid the obviously necessary regulation that a democracy needs to manage this kind of innovation.

AI is a useful general purpose technology, and that’s a big deal. But it’s not sentient and it’s not going to cause the U.S. economy to grow at ten times its normal speed. There’s an attempt to say that if you don’t accept extreme claims that everything is totally different now, it means you don’t believe AI matters at all. In fact, I’m certain that someone is going to cite this article and call me an “AI skeptic” just because I don’t believe that God is living in the servers of Anthropic. They want us to have a religious argument. Why?

The reason is that if you just see AI as a general purpose technology, then politics, which means our ability to come together through legal institutions and form a society, matters. This week, for instance, Chinese firm ByteDance released Seedance, an AI engine that can produce hyper-realistic film scenes. Here’s a clip it generated of Tom Cruise and Brad Pitt fighting.

Generative AI will change how we make and sell video, perhaps in profound ways. It is also enraging people whose likeness is being stolen to make unauthorized content. Immediately, Hollywood studios sued, as they should. And ByteDance changed its product to prevent systemic copyright violations.

And that’s… politics. That’s good. It’s what should happen. Tom Cruise has done a lot of work making beautiful stories, and he should have some control over his personhood. But what you are now seeing from AI proponents is vicious mockery, with the gist of their claim being “Don’t you know your work being devalued is inevitable?” It’s an attempt to convince us there’s nothing we can do but submit.

The dispute over Seedance is the whole fight in a nutshell. If AI changes everything, as Amodei claims, then old questions, like who owns what, don’t matter. But if AI is just another important technology, then how it gets deployed and for whose benefit does matter.

For instance, financial analyst Herb Greenberg looked at the effects of AI in health care and found that, surprise, surprise, it is used by hospitals and private equity-owned provider practices to cheat and raise prices. We already know that generative AI is great at price-fixing, and now we’re seeing it used to do exactly that.

Here’s an executive at a company called Insperity, which does HR services for small businesses, making that point.

We have recently been made aware that the use of AI tools by health care providers has emerged as an additional contributor to the higher cost trends, impacting everything from condition diagnosis and treatment plans to clinical documentation and coding for insurance billings to preauthorization and appeals processing.

To translate, AI is very good at lying to insurance companies and the government to squeeze out more money for private equity. Now, no normal person is surprised that a clear use case of AI is price-fixing to hike health care costs. But that’s also why most Americans have tuned out the elite conversation about AI. It’s not just annoying, it’s beside the point.

AI Agents Are Public Utilities

If you push the juvenile theological arguments to the side, there are very interesting political questions around machine learning and AI. The fundamental one is whether the companies who build AI tools should make money by helping their customers or manipulating them.

AI is good at certain things, like legal document review, where human beings are modeling and then verifying the output. It is terrible at producing reliable products. And that’s not going to get better; AI algorithms are big guessing machines. They do not “know” anything and cannot understand truth. That’s why attempts to replace, as opposed to supplement, white collar workers don’t strike me as likely to succeed.

Still, the ability to record and reuse the context and data across our entire society may be magical. If a trusted AI agent had access to your bank account, email, social media, location data, calendaring, home devices, purchases, medical history, search history, and workflow, maybe also a constant video stream of what you see, then weird stuff becomes possible. Apps and user interfaces disappear, and you could set your “agent” or set of “agents” to manage your life or the life of your family in a variety of customizable ways. To give you a small example of how I would use it, I might have an agent watch a bunch of different YouTube channels and ping me when someone discusses a monopoly.

Of course, there are many ways this kind of technology can and will be used and misused. Right now, in Silicon Valley, techies are obsessed with an open source tool called OpenClaw, which is the first draft of this personal agent technology. The product is massively insecure and most use cases seem kind of dumb to me, but it’s a notable development. OpenAI hired the creator of OpenClaw, and you can be sure that every big AI company is learning from its deployment.

Taking a step back, every AI chatbot is in a sense a kind of agent, perhaps less sophisticated or capable than OpenClaw, but using the data and context of our chats to offer increasingly personalized service. Creating agents that access our information and use it is a highly political endeavor. Recently, OpenAI made a decision to finance its product with advertising, drawing mockery in Super Bowl commercials. But the joke is deadly serious; if an agent is advertising to us, is it really operating on our behalf? Or is it a conflict of interest?

A few weeks ago, I wrote a piece about how Google is seeking to become the central planner of the economy. It’s been corrupt to have a search engine funded by advertising from the very beginning. But after Judge Amit Mehta ruled badly on the search antitrust suit, Google is trying to build out an entire “agentic economy” based on that same strategy. It rolled up personal data and the distribution of chatbots, and built partnerships with Apple, as well as virtually every retailer and payment network, in an attempt to set pricing across the economy.

When you log in to Gmail, Google is now summarizing your email. When you search, Google is summarizing the web. These are prototypes of agents, and they will improve. But are they operating on your behalf? On behalf of the advertisers who pay Google? Or on Google’s behalf? As AI drives significant changes in search, email, social networking, app stores, advertising, and so forth, Google will have the data and context to create a chokepoint that is even more dominant than the one it has today.

That is, unless we act. One obvious solution is to make sure that all agents have a clear fiduciary obligation to the person who deploys them. To force that, we would have to ban all advertising and all payments to finance agents except fees from the ultimate client. This policy would kill many of the bad incentives that Google has to manipulate pricing and the flow of commerce across the economy, and turn the company into a public utility. Weaker but still useful measures might include banning surveillance pricing or dynamic pricing, or other forms of price discrimination.

There are similar elements when it comes to Anthropic, which is building out market power by focusing on working within regulated spaces. And a host of Chinese AI models are now trying to capture the agent space, since their AI models are generally more efficient than America’s bloated approaches because Chinese firms don’t make money selling cloud computing services. Regardless of who is providing the agent, a regulatory model forcing agents to be on the side of the citizen is very different than one which isn’t. And that’s especially true if Google becomes the full provider of all commercial context and data, or if the Chinese come to dominate in key spheres.

A world where you are manipulated by the company providing you with a window into the world is very different than one where you are just paying for honest services. And that’s what the cultish weirdos are bullying us to avoid thinking about.

And now, the rest of the round-up after the paywall. There are a bunch of interesting stories. The FICO credit scoring monopoly seems to be ebbing, the Epstein Class becomes a political term, AOC went to Europe and said monopolies are a threat to national security and Western democracies, and a private equity company that has rolled up fonts (yes, fonts) is starting to squeeze customers. Oh, and Meta just got a patent on how to ‘simulate’ someone posting on social media after they’ve died. So that’s nice.

Read on for more.